library(CVXR)

library(ggplot2)

n <- 1

m <- 450

M <- 1 ## Huber threshold

p <- 0.1 ## Fraction of responses with sign flipped

## Generate problem data

set.seed(1289)

beta_true <- 5 * matrix(stats::rnorm(n), nrow = n)

X <- matrix(stats::rnorm(m * n), nrow = m, ncol = n)

y_true <- X %*% beta_true

eps <- matrix(stats::rnorm(m), nrow = m)

Huber Regression

Introduction

Huber regression (Huber 1964) is a regression technique that is robust to outliers. The idea is to replace the traditional least-squares loss with a loss function that penalizes large residuals less severely; we solve

\[\begin{array}{ll} \underset{\beta}{\mbox{minimize}} & \sum_{i=1}^m \phi(y_i - x_i^T\beta) \end{array}\]

for variable \(\beta \in {\mathbf R}^n\), where the loss \(\phi\) is the Huber function with threshold \(M > 0\),

\[ \phi(u) = \begin{cases} u^2 & \mbox{if } |u| \leq M \\ 2M|u| - M^2 & \mbox{if } |u| > M. \end{cases} \]

This function is identical to the least squares penalty for small residuals, but on large residuals, its penalty is lower and increases linearly rather than quadratically. It is thus more forgiving of outliers.
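To make the definition concrete, here is a minimal base-R sketch of the Huber function. The name `huber_loss` is a hypothetical helper introduced only for illustration (it is not part of CVXR), and the large-residual branch is written as \(2M|u| - M^2\) so that the penalty is symmetric in \(u\).

```r
## Hypothetical helper (not part of CVXR): the Huber function with threshold M.
huber_loss <- function(u, M = 1) {
    stopifnot(M > 0)
    ## Quadratic for |u| <= M, linear in |u| beyond the threshold.
    ifelse(abs(u) <= M, u^2, 2 * M * abs(u) - M^2)
}

u <- c(-3, -0.5, 0, 0.5, 3)

## Side-by-side comparison with the squared loss.
cbind(u = u, huber = huber_loss(u, M = 1), squared = u^2)
```

For a residual of 3 with \(M = 1\), the Huber penalty is \(2 \cdot 1 \cdot 3 - 1 = 5\), against 9 for the squared loss; for residuals within the threshold the two penalties coincide.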

Example

We generate some problem data.

We will randomly flip the sign of some responses to illustrate the robustness.

factor <- 2*stats::rbinom(m, size = 1, prob = 1-p) - 1

y <- factor * y_true + eps

We can solve this problem using both ordinary least squares (OLS) and Huber regression and compare the results.

beta <- Variable(n)

rel_err <- cvxr_norm(beta - beta_true, "F") / norm(beta_true, "F")

## OLS

obj <- sum((y - X %*% beta)^2)

prob <- Problem(Minimize(obj))

result <- psolve(prob)

check_solver_status(prob)

beta_ols <- value(beta)

err_ols <- value(rel_err)

## Solve Huber regression problem

obj <- sum(CVXR::huber(y - X %*% beta, M))

prob <- Problem(Minimize(obj))

result <- psolve(prob)

check_solver_status(prob)

beta_hub <- value(beta)

err_hub <- value(rel_err)

Finally, we also solve the OLS problem assuming we know which signs were flipped.

## Solve ordinary least squares assuming sign flips known

obj <- sum((y - factor*(X %*% beta))^2)

prob <- Problem(Minimize(obj))

result <- psolve(prob)

check_solver_status(prob)

beta_prs <- value(beta)

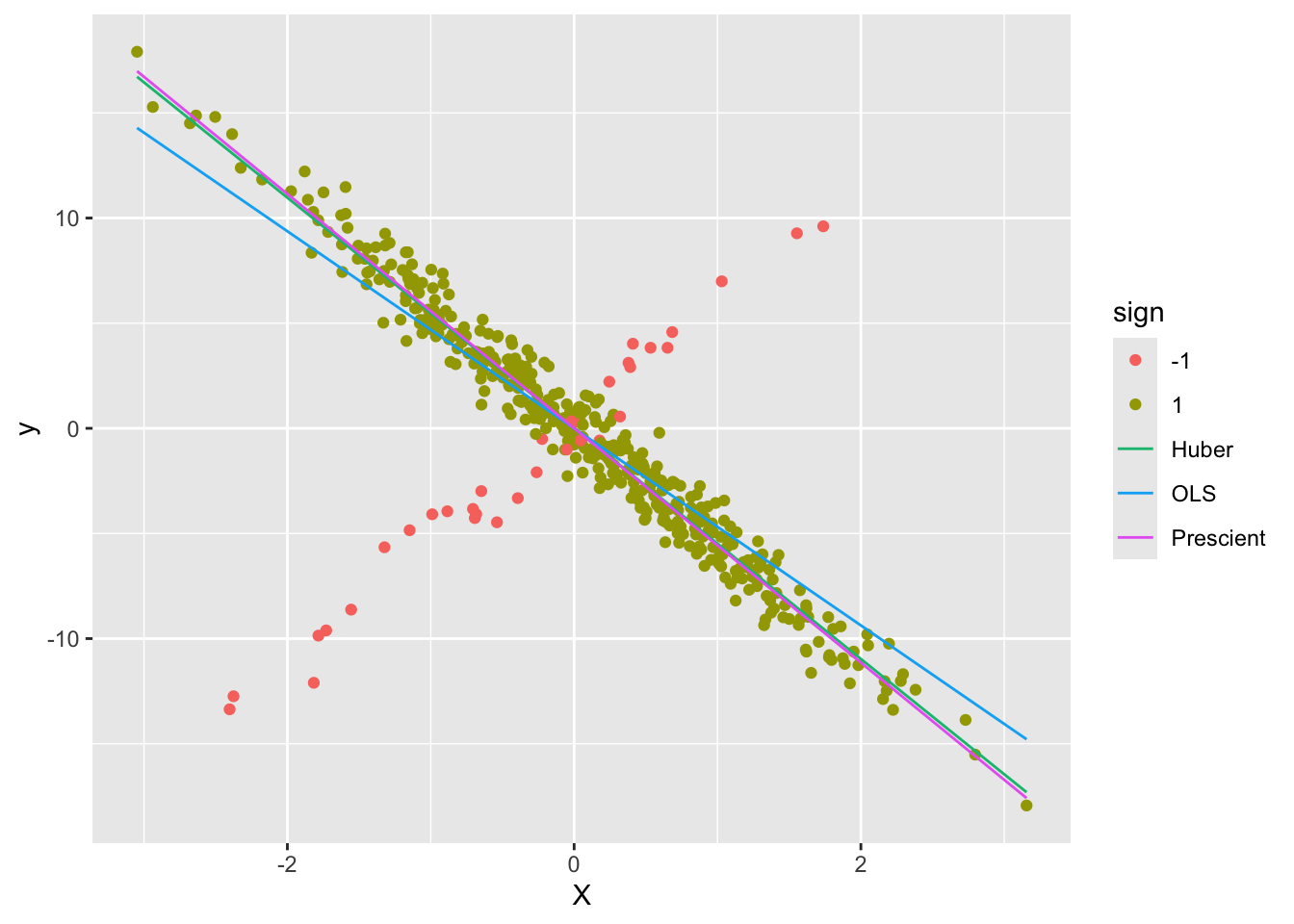

err_prs <- value(rel_err)We can now plot the fit against the measured responses.

d1 <- data.frame(X = X, y = y, sign = as.factor(factor))

d2 <- data.frame(X = rbind(X, X, X),

yHat = rbind(X %*% beta_ols,

X %*% beta_hub,

X %*% beta_prs),

Estimate = c(rep("OLS", m),

rep("Huber", m),

rep("Prescient", m)))

ggplot() +

geom_point(data = d1, mapping = aes(x = X, y = y, color = sign)) +

geom_line(data = d2, mapping = aes(x = X, y = yHat, color = Estimate))

As can be seen, the Huber line lies closer to the prescient line than the OLS line does.
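As a rough cross-check that does not depend on CVXR, the same comparison can be sketched in base R. This is an illustration under stated assumptions, not the method used above: because \(n = 1\) here, the Huber objective is a convex function of a single scalar, so `stats::optimize` can minimize it directly. The names `huber_loss`, `huber_obj`, `rel_err_of`, `flip`, and the search interval `c(-50, 50)` are hypothetical choices made for this sketch. The random draws are made in the same order as in the example.

```r
## Hypothetical helper for this sketch (not part of CVXR).
huber_loss <- function(u, M) ifelse(abs(u) <= M, u^2, 2 * M * abs(u) - M^2)

## Regenerate the data (n = 1, so the design matrix is a single column).
set.seed(1289)
m <- 450; M <- 1; p <- 0.1
beta_true <- 5 * stats::rnorm(1)
x <- stats::rnorm(m)
eps <- stats::rnorm(m)
flip <- 2 * stats::rbinom(m, size = 1, prob = 1 - p) - 1
y <- flip * (x * beta_true) + eps

## OLS without intercept: closed form for a single regressor.
b_ols <- sum(x * y) / sum(x^2)

## Huber: one-dimensional convex minimization over an assumed interval.
huber_obj <- function(b) sum(huber_loss(y - x * b, M))
b_hub <- stats::optimize(huber_obj, interval = c(-50, 50), tol = 1e-10)$minimum

## Prescient OLS: regress y on the sign-corrected regressor.
xf <- flip * x
b_prs <- sum(xf * y) / sum(xf^2)

## Relative errors with respect to the true coefficient.
rel_err_of <- function(b) abs(b - beta_true) / abs(beta_true)
round(c(OLS = rel_err_of(b_ols), Huber = rel_err_of(b_hub),
        Prescient = rel_err_of(b_prs)), 4)
```

The printed relative errors depend on the random draw and on the solver tolerance, so small differences from the CVXR results are to be expected.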

Session Info

R version 4.5.3 (2026-03-11)

Platform: aarch64-apple-darwin20

Running under: macOS Tahoe 26.3.1

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/4.5-arm64/Resources/lib/libRblas.0.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/4.5-arm64/Resources/lib/libRlapack.dylib; LAPACK version 3.12.1

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

time zone: America/Los_Angeles

tzcode source: internal

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] ggplot2_4.0.2 CVXR_1.8.2

loaded via a namespace (and not attached):

[1] gmp_0.7-5.1 generics_0.1.4 clarabel_0.11.2 slam_0.1-55

[5] lattice_0.22-9 digest_0.6.39 magrittr_2.0.4 evaluate_1.0.5

[9] grid_4.5.3 RColorBrewer_1.1-3 fastmap_1.2.0 rprojroot_2.1.1

[13] jsonlite_2.0.0 Matrix_1.7-5 ECOSolveR_0.6.1 backports_1.5.1

[17] scs_3.2.7 Rmosek_11.1.1 xpress_9.8.1 scales_1.4.0

[21] codetools_0.2-20 cli_3.6.5 rlang_1.1.7 Rglpk_0.6-5.1

[25] withr_3.0.2 yaml_2.3.12 otel_0.2.0 tools_4.5.3

[29] osqp_1.0.0 Rcplex_0.3-8 checkmate_2.3.4 scip_1.10.0-3

[33] dplyr_1.2.0 here_1.0.2 gurobi_13.0-1 vctrs_0.7.2

[37] R6_2.6.1 lifecycle_1.0.5 htmlwidgets_1.6.4 pkgconfig_2.0.3

[41] cccp_0.3-3 pillar_1.11.1 gtable_0.3.6 glue_1.8.0

[45] Rcpp_1.1.1 xfun_0.57 tibble_3.3.1 tidyselect_1.2.1

[49] knitr_1.51 dichromat_2.0-0.1 highs_1.12.0-3 farver_2.1.2

[53] htmltools_0.5.9 labeling_0.4.3 rmarkdown_2.31 piqp_0.6.2

[57] compiler_4.5.3 S7_0.2.1